|


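Besides the search engines we all know, such as Baidu, Google, Sogou and 360, there are many other search engines out there. Most of them bring no traffic, and their heavy crawling wastes the host's CPU and bandwidth. Blocking them is simple: follow the steps below. The idea is to inspect the request's User-Agent (UA) and reject the ones you specify.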

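How to do it in the BT (宝塔) panel: 1. Go to the directory /www/server/nginx/conf and create a new file named agent_deny.conf (any other name works too). Once the file is created, click to edit it, paste in the code below, and save.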

(Re-collected and organized by 天辰; so far this is the most complete and thorough version of the code.)
# Block scraping tools such as Scrapy
if ($http_user_agent ~* (Scrapy|Curl|HttpClient|crawl|curb|git|Wtrace)) {
    return 403;
}

# Block the specified UAs, and requests with an empty UA
if ($http_user_agent ~* "CheckMarkNetwork|Synapse|Nimbostratus-Bot|Dark|scraper|LMAO|Hakai|Gemini|Wappalyzer|masscan|crawler4j|Mappy|Center|eright|aiohttp|MauiBot|Crawler|researchscan|Dispatch|AlphaBot|Census|ips-agent|NetcraftSurveyAgent|ToutiaoSpider|EasyHttp|Iframely|sysscan|fasthttp|muhstik|DeuSu|mstshash|HTTP_Request|ExtLinksBot|package|SafeDNSBot|CPython|SiteExplorer|SSH|MegaIndex|BUbiNG|CCBot|NetTrack|Digincore|aiHitBot|SurdotlyBot|null|SemrushBot|Test|Copied|ltx71|Nmap|DotBot|AdsBot|InetURL|Pcore-HTTP|PocketParser|Wotbox|newspaper|DnyzBot|redback|PiplBot|SMTBot|WinHTTP|Auto Spider 1.0|GrabNet|TurnitinBot|Go-Ahead-Got-It|Download Demon|Go!Zilla|GetWeb!|GetRight|libwww-perl|Cliqzbot|MailChimp|SMTBot|Dataprovider|XoviBot|linkdexbot|SeznamBot|Qwantify|spbot|evc-batch|zgrab|Go-http-client|FeedDemon|Jullo|Feedly|YandexBot|oBot|FlightDeckReports|Linguee Bot|JikeSpider|Indy Library|Alexa Toolbar|AskTbFXTV|AhrefsBot|CrawlDaddy|CoolpadWebkit|Java|UniversalFeedParser|ApacheBench|Microsoft URL Control|Swiftbot|ZmEu|jaunty|Python-urllib|lightDeckReports Bot|YYSpider|DigExt|HttpClient|MJ12bot|EasouSpider|LinkpadBot|Ezooms|^$") {
    return 403;
}

# Block any request method other than GET, HEAD or POST
if ($request_method !~ ^(GET|HEAD|POST)$) {
    return 403;
}

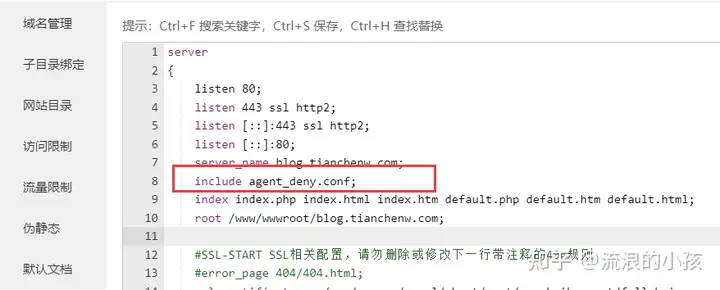
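To make the logic of the three rules easier to follow, here is a minimal Python sketch that mimics how they are evaluated. It is only a model, not what nginx runs: nginx uses PCRE while this uses Python's `re`, the deny list is a shortened subset of the one above, and the function name `would_block` is made up for illustration.

```python
import re

# Rule 1: scraping tools (same alternatives as the first nginx `if`, case-insensitive like `~*`).
TOOLS = re.compile(r"(Scrapy|Curl|HttpClient|crawl|curb|git|Wtrace)", re.IGNORECASE)

# Rule 2: a shortened subset of the long deny list, plus ^$ to catch an empty UA.
DENY = re.compile(r"AhrefsBot|MJ12bot|SemrushBot|Python-urllib|ApacheBench|^$", re.IGNORECASE)

# Rule 3: only these request methods are allowed (case-sensitive, like `!~`).
METHODS = re.compile(r"^(GET|HEAD|POST)$")


def would_block(user_agent: str, method: str = "GET") -> bool:
    """Return True if the modeled rules would answer 403 for this request."""
    if TOOLS.search(user_agent):
        return True
    if DENY.search(user_agent):
        return True
    if not METHODS.search(method):
        return True
    return False
```

For example, `would_block("")` and `would_block("curl/8.0")` are both true, while a UA that only contains `Baiduspider` passes all three rules.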

2. Go to [Website] - [Settings], click the [Config File] tab on the left, and insert the following code at around line 7-8:

include agent_deny.conf;

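After adding it, save and restart nginx. From then on, these spiders and tools get a 403 Forbidden response when they scan the site. Note: if your site uses 火车头 (LocoySpider) to collect and publish content, the code above will return a 403 error and publishing will fail.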

If you want to publish with LocoySpider, use the code below instead:
# Block scraping tools such as Scrapy
if ($http_user_agent ~* (Scrapy|Curl|HttpClient|crawl|curb|git|Wtrace)) {
    return 403;
}

# Block the specified UAs (the empty-UA pattern ^$ is left out in this version)
if ($http_user_agent ~* "CheckMarkNetwork|Synapse|Nimbostratus-Bot|Dark|scraper|LMAO|Hakai|Gemini|Wappalyzer|masscan|crawler4j|Mappy|Center|eright|aiohttp|MauiBot|Crawler|researchscan|Dispatch|AlphaBot|Census|ips-agent|NetcraftSurveyAgent|ToutiaoSpider|EasyHttp|Iframely|sysscan|fasthttp|muhstik|DeuSu|mstshash|HTTP_Request|ExtLinksBot|package|SafeDNSBot|CPython|SiteExplorer|SSH|MegaIndex|BUbiNG|CCBot|NetTrack|Digincore|aiHitBot|SurdotlyBot|null|SemrushBot|Test|Copied|ltx71|Nmap|DotBot|AdsBot|InetURL|Pcore-HTTP|PocketParser|Wotbox|newspaper|DnyzBot|redback|PiplBot|SMTBot|WinHTTP|Auto Spider 1.0|GrabNet|TurnitinBot|Go-Ahead-Got-It|Download Demon|Go!Zilla|GetWeb!|GetRight|libwww-perl|Cliqzbot|MailChimp|SMTBot|Dataprovider|XoviBot|linkdexbot|SeznamBot|Qwantify|spbot|evc-batch|zgrab|Go-http-client|FeedDemon|Jullo|Feedly|YandexBot|oBot|FlightDeckReports|Linguee Bot|JikeSpider|Indy Library|Alexa Toolbar|AskTbFXTV|AhrefsBot|CrawlDaddy|CoolpadWebkit|Java|UniversalFeedParser|ApacheBench|Microsoft URL Control|Swiftbot|ZmEu|jaunty|Python-urllib|lightDeckReports Bot|YYSpider|DigExt|HttpClient|MJ12bot|EasouSpider|LinkpadBot|Ezooms") {
    return 403;
}

# Block any request method other than GET, HEAD or POST
if ($request_method !~ ^(GET|HEAD|POST)$) {
    return 403;
}
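Comparing the two versions of the long deny list, the only difference appears to be the trailing `^$` alternative, which is what matches an empty User-Agent. The Python sketch below illustrates that effect on a shortened, illustrative subset of the list. That LocoySpider publishes with an empty UA is an assumption inferred from this difference, not something the original states, and the helper name `blocked` is hypothetical.

```python
import re

# Shortened, illustrative subsets of the deny list; only the ending differs.
WITH_EMPTY = re.compile(r"MJ12bot|EasouSpider|Ezooms|^$", re.IGNORECASE)
WITHOUT_EMPTY = re.compile(r"MJ12bot|EasouSpider|Ezooms", re.IGNORECASE)


def blocked(pattern: re.Pattern, user_agent: str) -> bool:
    """Return True if the pattern matches anywhere in the User-Agent."""
    return pattern.search(user_agent) is not None


# An empty UA is only caught when ^$ is part of the pattern.
empty_ua_first_version = blocked(WITH_EMPTY, "")
empty_ua_second_version = blocked(WITHOUT_EMPTY, "")

# A listed crawler is caught by both versions.
listed_first_version = blocked(WITH_EMPTY, "MJ12bot/v1.4")
listed_second_version = blocked(WITHOUT_EMPTY, "MJ12bot/v1.4")
```

So the second version still rejects every named crawler, but lets through clients that send no User-Agent at all.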

Once everything is set up, you can use a simulated-crawl tool to check that no good spiders have been blocked by mistake. Note: the spider names blocked above do not include the following six common spiders:
Baidu: Baiduspider
Google: Googlebot
Bing: bingbot
Sogou: Sogou web spider
360: 360Spider
Shenma: YisouSpider

Common crawler User-Agents and what they are typically used for:
FeedDemon: content scraping
BOT/0.1 (BOT for JCE): SQL injection
CrawlDaddy: SQL injection
Java: content scraping
Jullo: content scraping
Feedly: content scraping
UniversalFeedParser: content scraping
ApacheBench: CC attack tool
Swiftbot: useless crawler
YandexBot: useless crawler
AhrefsBot: useless crawler
jikeSpider: useless crawler
MJ12bot: useless crawler
ZmEu: phpMyAdmin vulnerability scanning
WinHttp: scraping, CC attacks
EasouSpider: useless crawler
HttpClient: TCP attacks
Microsoft URL Control: scanning
YYSpider: useless crawler
jaunty: WordPress brute-force scanner
oBot: useless crawler
Python-urllib: content scraping
Indy Library: scanning
FlightDeckReports Bot: useless crawler
Linguee Bot: useless crawler
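If you do not have a simulated-crawl tool at hand, the check for wrongly blocked good spiders can be sketched in a few lines of Python with the standard library. This is just one possible approach: the function names `status_for` and `check_spiders` are made up for this example, and it sends only the short spider name as the User-Agent rather than the full string a real spider uses.

```python
import urllib.error
import urllib.request


def status_for(url: str, user_agent: str, timeout: float = 10.0) -> int:
    """Request `url` with the given User-Agent and return the HTTP status code."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urllib raises on 4xx/5xx responses; the status code is still what we want.
        return error.code


def check_spiders(url: str, user_agents: list[str]) -> dict[str, int]:
    """Map each User-Agent to the status code the site answers with."""
    return {ua: status_for(url, ua) for ua in user_agents}
```

Call it with your own site's address, for example `check_spiders("http://example.com/", ["Baiduspider", "Googlebot", "bingbot", "Sogou web spider", "360Spider", "YisouSpider", "AhrefsBot"])`, where `example.com` is a placeholder. With the rules in place you would expect 403 only for names on the deny list, such as `AhrefsBot`.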

Source: BT 宝塔 tutorial on blocking junk search-engine spiders and scraping/scanning tools


|